You can convert a transformer-based LLM checkpoint to ONNX format for efficient, deployable inference by using Hugging Face's `transformers` library together with its ONNX export utilities.

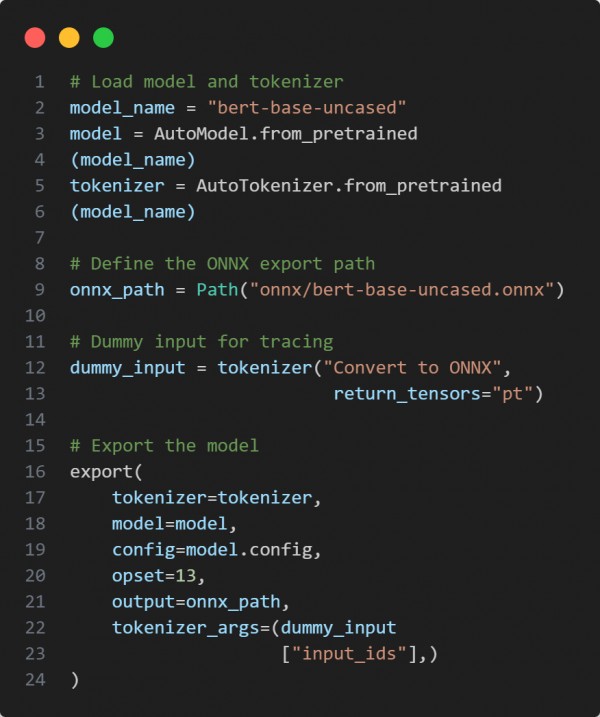

Here is the code snippet below:

The code above covers the following key points:

- Loading a pretrained model and tokenizer from Hugging Face.
- Creating a dummy input that simulates the model's input shape for ONNX tracing.
- Using `transformers.onnx.export` to handle the ONNX conversion process.

This approach simplifies converting transformer checkpoints into ONNX for optimized, hardware-agnostic deployment.
