To reduce the tendency of attention weights to over-focus on recent tokens, you can use relative positional embeddings, apply decay masks, or integrate a memory-augmented transformer mechanism.

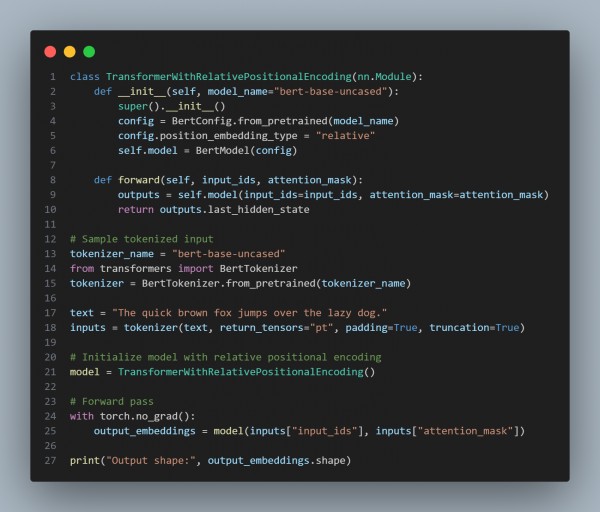

Here is the code snippet you can refer to:

The snippet relies on the following key points:

- Relative Positional Embeddings: Configures BERT to use relative positional encoding to balance attention across tokens.

- BERT-Based Model: Uses a transformer model (BertModel) with modified position embedding settings.

- Tokenization: Processes input text with BertTokenizer to prepare it for model inference.

- Forward Pass: Generates hidden states with attention mechanisms adjusted via relative positions.

- Output Analysis: Inspects the attention weights to check whether attention is spread more evenly across the sequence.
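
The key points above can be sketched roughly as follows. This is a minimal illustration, not a definitive implementation. It assumes PyTorch and a Hugging Face `transformers` release whose `BertConfig` supports `position_embedding_type`. To stay runnable offline it builds a tiny, randomly initialised model and uses placeholder token IDs in place of real `BertTokenizer` output. All sizes are illustrative assumptions.

```python
import torch
from transformers import BertConfig, BertModel

torch.manual_seed(0)

# Tiny, randomly initialised BERT so the sketch runs without downloading weights.
# Every size below is an illustrative assumption.
config = BertConfig(
    vocab_size=1000,
    hidden_size=64,
    num_hidden_layers=2,
    num_attention_heads=4,
    intermediate_size=128,
    max_position_embeddings=64,
    position_embedding_type="relative_key",  # instead of the default "absolute"
    attn_implementation="eager",  # eager attention so the weights can be returned
)
model = BertModel(config)
model.eval()  # disable dropout for a deterministic forward pass

# Placeholder token IDs standing in for BertTokenizer output (batch of 1, 12 tokens).
input_ids = torch.randint(low=1, high=config.vocab_size, size=(1, 12))

with torch.no_grad():
    outputs = model(input_ids=input_ids, output_attentions=True)

hidden_states = outputs.last_hidden_state   # shape: (batch, seq_len, hidden_size)
last_layer_attn = outputs.attentions[-1]    # shape: (batch, heads, seq_len, seq_len)

# Average over heads, then inspect how the final token distributes its attention.
final_token_attn = last_layer_attn.mean(dim=1)[0, -1]
print(final_token_attn)
```

With a real checkpoint you would load the tokenizer and model with `from_pretrained` instead. Checkpoints trained with absolute positions, such as `bert-base-uncased`, do not contain relative-position weights, so those parameters start out randomly initialised and need fine-tuning before the attention pattern is meaningful.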

Hence, by implementing relative positional embeddings in the transformer, we can mitigate the excessive focus on recent tokens, leading to more balanced and contextually aware sequence generation.
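
One way to check whether the focus on recent tokens has actually decreased is to measure how much of a query's attention falls on the last few positions. The sketch below is plain Python. The function name `recent_attention_mass` and the two example rows are hypothetical and made up for illustration; they are not measured results.

```python
def recent_attention_mass(weights, k):
    """Return the fraction of one query's attention that lands on the last k keys."""
    if not 0 < k <= len(weights):
        raise ValueError("k must be between 1 and len(weights)")
    total = sum(weights)
    return sum(weights[-k:]) / total


# Hypothetical attention rows for a 6-token sequence (each sums to 1).
recency_biased = [0.02, 0.03, 0.05, 0.10, 0.30, 0.50]
more_balanced = [0.15, 0.15, 0.15, 0.15, 0.20, 0.20]

biased_mass = recent_attention_mass(recency_biased, k=2)
balanced_mass = recent_attention_mass(more_balanced, k=2)
print(biased_mass, balanced_mass)
```

For these made-up rows the last two tokens receive about 0.8 of the attention in the first case and about 0.4 in the second. A perfectly uniform row would give `k / len(weights)`, which is a useful baseline when comparing a model before and after the change.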
